Note: the download helpers in urllib.request, such as urlretrieve, are considered “legacy” from Python 2 and, in the words of the Python documentation, “might become deprecated at some point in the future.” In my opinion, there’s a big divide between “might” become deprecated and “will” become deprecated. In other words, this is probably a safe approach for the foreseeable future.

The Python requests module is a super friendly library billed as “HTTP for Humans.” Offering very simplified APIs, requests lives up to its motto even for high-throughput HTTP-related demands. However, it doesn’t feature a one-liner for downloading files. Instead, after `import requests`, one must manually save the streamed file data. There are some important aspects of this approach to keep in mind, most notably the binary format of the data transfer. Python can download text or binary data from a URL by reading the response, and the downloaded data can be stored in a variable and/or saved to a local drive as a file.

When a web browser loads a page (or file), it decodes it using the encoding specified by the host. Common encodings include UTF-8 and Latin-1. This is a directive aimed at web browsers that are receiving and displaying data, and it isn’t immediately applicable to downloading files. Note: downloaded files may need to be decoded with the right encoding in order to display properly. That’s beyond the scope of this tutorial.

The wget Python library offers a method similar to urllib’s and attracts a lot of attention because its name is identical to the Linux wget command. Note: the wget.download function uses a combination of urllib, tempfile, and shutil to retrieve the downloaded data, save it to a temporary file, and then move that file (and rename it) to the specified location.

Final Thoughts

Downloading files with Python is super simple and can be accomplished using the standard urllib functions. I’ve found the requests library to offer the easiest and most versatile APIs for common HTTP-related tasks.
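The manual, streamed save with requests described above can be sketched roughly as follows. This is a minimal illustration rather than the article's original listing: the function name `download_file`, the 8192-byte chunk size, and the 30-second timeout are all assumptions chosen for the example.

```python
import requests


def download_file(url, local_path, chunk_size=8192):
    """Stream the body at `url` into `local_path` and return the path.

    `download_file`, `chunk_size`, and the timeout are illustrative choices,
    not part of the requests API itself.
    """
    # stream=True defers fetching the body until we iterate over it
    with requests.get(url, stream=True, timeout=30) as response:
        # Raise an exception for 4xx/5xx responses instead of saving an error page
        response.raise_for_status()
        # Binary mode: the bytes are written exactly as they were received
        with open(local_path, "wb") as fh:
            for chunk in response.iter_content(chunk_size=chunk_size):
                fh.write(chunk)
    return local_path
```

Calling `download_file("https://example.com/robots.txt", "robots.txt")` (a placeholder URL) would write the response body to robots.txt in the working directory. Writing in chunks keeps memory use flat for large files, which is the main reason to stream instead of reading `response.content` in one go.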

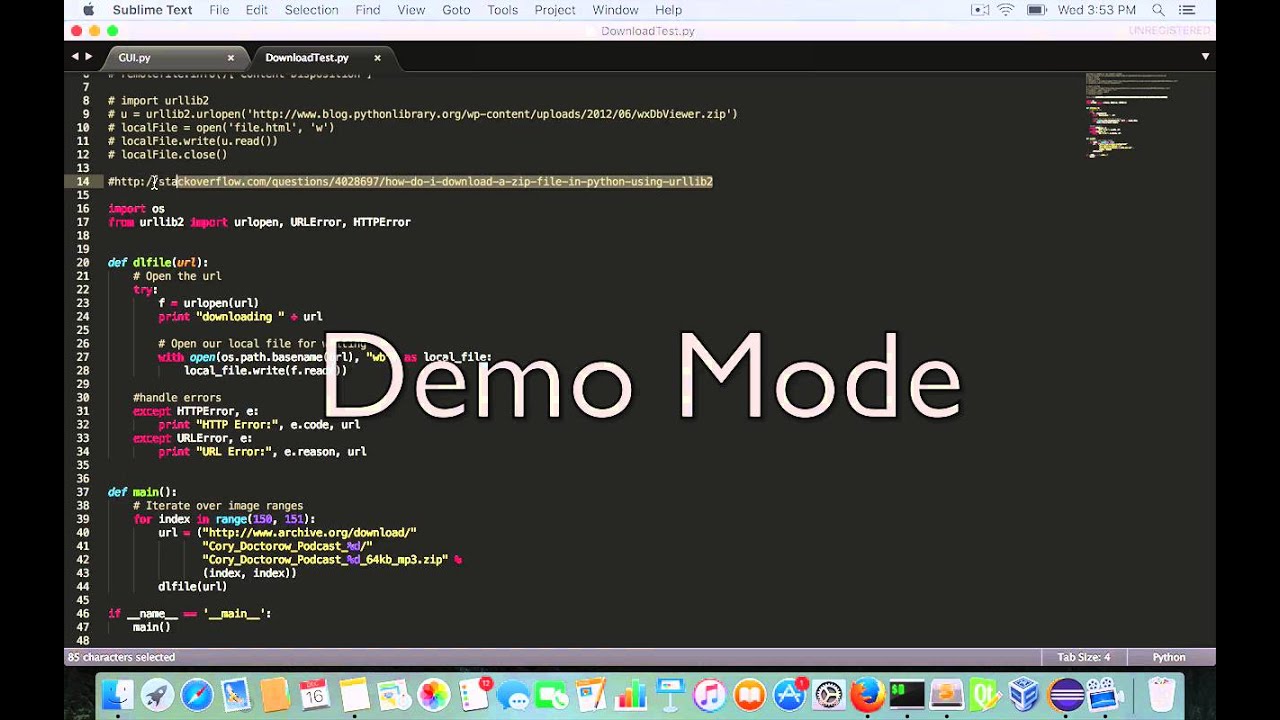

This article outlines three ways to download a file using Python, with a short discussion of each.

Python’s urllib library offers a range of functions designed to handle common URL-related tasks. This includes parsing, requesting, and (you guessed it) downloading files. Let’s consider a basic example of downloading a robots.txt file, which starts with `from urllib import request`. Other libraries, most notably the Python requests library, can provide a clearer API for those more concerned with higher-level operations.

For concurrent downloads, note the use of the results list, which forces Python to continue execution until all the threads are complete. Without iterating over the results list, the program will terminate even before the threads are started. Also note that the script runs 5 threads concurrently, and you may want to increase that number if you have a large number of files to download. However, this puts substantial load on the server, and you need to be sure that the server can handle such concurrent loads. The script imports `requests` and `ThreadPool` (`from multiprocessing.pool import ThreadPool`), creates a pool of five threads with `ThreadPool(5)`, and assigns the pool's output to `results`. Each call takes the next element in the urls list.
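A thread-pool download along the lines described above might look like the sketch below. The original script is not fully preserved, so this is an assumption-laden reconstruction: `fetch_url` and `download_all` are hypothetical names, and `imap_unordered` is one reasonable way to feed the pool, not necessarily the call the author used.

```python
import os
from multiprocessing.pool import ThreadPool
from urllib.parse import urlparse

import requests


def fetch_url(url):
    """Download one URL into the current directory; return (url, local_name)."""
    # Assumption: the last path segment of the URL is a usable file name
    local_name = os.path.basename(urlparse(url).path)
    response = requests.get(url, timeout=30)
    response.raise_for_status()
    with open(local_name, "wb") as fh:
        fh.write(response.content)
    return url, local_name


def download_all(urls, workers=5, fetch=fetch_url):
    """Run `fetch` over `urls` on a pool of `workers` threads."""
    with ThreadPool(workers) as pool:
        # Each call to `fetch` receives the next element of `urls`.
        # imap_unordered yields results as they finish; wrapping it in
        # list() consumes every result, so we block until all are done.
        return list(pool.imap_unordered(fetch, urls))
```

Consuming the iterator with `list()` plays the role of the loop over the results list: it keeps the program alive until every download has completed. The `workers` value is the knob to turn when the list of files grows, keeping in mind the load it places on the server.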

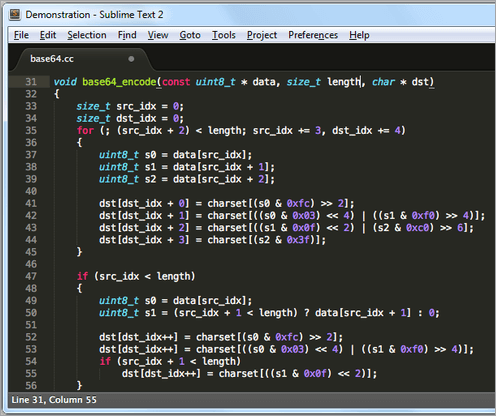


You may need to prefix the above command with sudo if you get a permission error on your Linux system.

A short Python 3 program can download a given URL to a local file. The example assumes that the URL contains the name of the file at the end and uses it as the name of the locally saved file. That is, the last segment after the final / is taken to be the file name, so if the URL is abc/xyz/file.txt, the file name will be file.txt. After running the program, you will find a file named "posts" in the same folder where you have the script saved.

A Python 3 program can likewise download a list of URLs to a list of local files, again using the file name at the end of each URL as the local file name. However, the download may take some time since it is executed sequentially.

The download can be sped up substantially by running the downloads in parallel. Multiple files can be downloaded concurrently by using the multiprocessing library, which has support for thread pools.
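As a baseline for comparison, the file-naming rule and the sequential download described above can be sketched as follows. The original listings are not preserved, so the helper names (`filename_from_url`, `download`, `download_sequentially`) are hypothetical, and the sketch uses the standard library's `urllib.request.urlopen` purely to stay dependency-free; the article's own programs may have used a different library.

```python
import os
import shutil
from urllib.parse import urlparse
from urllib.request import urlopen


def filename_from_url(url):
    """Return the last path segment, e.g. 'file.txt' for 'abc/xyz/file.txt'."""
    # Assumes the segment after the final / really is the file name
    return os.path.basename(urlparse(url).path)


def download(url, local_name=None):
    """Save `url` to `local_name` (default: name taken from the URL)."""
    local_name = local_name or filename_from_url(url)
    # Copy the response body to disk in binary mode, without loading it all at once
    with urlopen(url, timeout=30) as response, open(local_name, "wb") as fh:
        shutil.copyfileobj(response, fh)
    return local_name


def download_sequentially(urls):
    """Download each URL in turn; total time is the sum of the individual downloads."""
    return [download(url) for url in urls]
```

Because `download_sequentially` waits for each transfer to finish before starting the next, its running time grows linearly with the number of URLs, which is the cost that a thread pool is meant to reduce.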